Dify is a powerful open-source LLM application development platform, but like any complex system, it can sometimes return an Internal Server Error—often shown as HTTP 500. When this happens, it can disrupt workflows, impact users, and create uncertainty about what went wrong. The good news is that most Dify internal server errors are traceable to configuration issues, dependency failures, or infrastructure limitations, and they can be fixed with a systematic approach.

TLDR: A Dify internal server error (HTTP 500) is usually caused by misconfiguration, service dependency issues, database failures, or resource limits. Start by reviewing container logs and environment variables, then verify database and Redis connections. Check API keys, reverse proxy settings, and server resources. In most cases, careful log analysis and configuration validation will resolve the issue quickly.

Understanding What an Internal Server Error Means

An HTTP 500 Internal Server Error indicates that the server encountered an unexpected condition that prevented it from fulfilling a request. Unlike client-side errors (such as 404 or 403), this error originates on the server.

In Dify, this typically means:

- Backend services failed to start or connect

- Database or Redis is unreachable

- Environment variables are misconfigured

- Third-party API calls (e.g., OpenAI, Azure) are failing

- Server resource exhaustion (CPU, memory, disk)

Before making changes, it is important to identify exactly where the failure is occurring.

Step 1: Check Dify Application Logs

The fastest way to diagnose an internal server error is by reviewing logs. If you are using Docker (which most Dify installations do), run:

docker compose logs -f

Or inspect a specific container:

docker logs <container_name>

Look for:

- Stack traces

- Database connection errors

- Redis connection failures

- Authentication or API key errors

Logs typically reveal whether the problem stems from configuration, network connectivity, or an upstream API failure.
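Raw container logs can be noisy, so it may help to filter them down to the lines that usually accompany a 500. The sketch below is one way to do that; filter_errors is a hypothetical helper name, and the pattern list is an illustrative assumption rather than an official list of Dify log messages.

```shell
# Keep only log lines that commonly accompany an HTTP 500.
# The pattern list is an illustrative assumption; extend it for your setup.
filter_errors() {
  grep -iE 'traceback|exception|error|connection refused|timed? ?out|unauthorized'
}

# Example usage (requires Docker; run from the directory with your compose file):
#   docker compose logs --no-color --tail=500 | filter_errors
```

Because the helper reads standard input, the same filter also works on a saved log file or on the output of docker logs for a single container.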

Step 2: Verify Environment Variables

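Many Dify internal server errors occur because of incorrect environment variables in the .env file. Variable names vary between Dify releases, so treat the names below as examples and compare them against the .env.example file shipped with your version.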

Pay close attention to:

- DATABASE_URL

- REDIS_URL

- OPENAI_API_KEY or other model provider keys

- SECRET_KEY

- APP_ENV

Common mistakes include:

- Mis-typed credentials

- Wrong port numbers

- Missing quotation marks

- Expired API keys

After modifying your .env file, restart all services:

docker compose down

docker compose up -d

Failure to restart services means changes will not take effect.
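Before restarting, a quick check for missing or empty keys can catch the most common mistakes. The following is a minimal sketch; check_env_keys is a hypothetical helper, and the key names in the usage comment are taken from the list above and may not match your release.

```shell
# Report keys that are missing or have no value in an env file.
# Usage: check_env_keys FILE KEY...
# Returns non-zero if at least one key is missing or empty.
# Note: a value of empty quotes (KEY="") still counts as set here.
check_env_keys() {
  env_file=$1
  shift
  rc=0
  for key in "$@"; do
    # Require at least one character after the equals sign.
    if ! grep -Eq "^${key}=.+" "$env_file"; then
      echo "missing or empty: $key"
      rc=1
    fi
  done
  return "$rc"
}

# Example usage (key names are examples; adjust to your release):
#   check_env_keys .env SECRET_KEY DATABASE_URL REDIS_URL
```

The check only confirms that a value exists. It cannot tell whether a credential is correct or an API key has expired, so the logs remain the final authority.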

Step 3: Confirm Database and Redis Connectivity

Dify depends heavily on PostgreSQL and Redis. If either service fails, the application may throw an internal server error.

To test PostgreSQL:

docker exec -it <postgres_container> psql -U postgres

To test Redis:

docker exec -it <redis_container> redis-cli ping

You should see:

- Database prompt for PostgreSQL

- PONG response from Redis

If not:

- Check container status with docker ps

- Check for port conflicts

- Ensure no firewall is blocking communication
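Right after a restart, PostgreSQL and Redis may need a few seconds before they accept connections, so a single failed check is not always meaningful. The sketch below retries a command a fixed number of times; wait_for is a hypothetical helper, and the container names in the usage comment are the same placeholders used in the commands above.

```shell
# Retry a command until it succeeds or the attempts run out.
# Usage: wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Returns 0 on the first success, 1 if every attempt fails.
wait_for() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    "$@" >/dev/null 2>&1 && return 0
    # Sleep only when another attempt remains.
    [ "$i" -lt "$attempts" ] && sleep "$delay"
    i=$((i + 1))
  done
  return 1
}

# Example usage (requires Docker; container names are placeholders):
#   wait_for 10 3 docker exec <redis_container> redis-cli ping && echo "Redis is up"
#   wait_for 10 3 docker exec <postgres_container> pg_isready -U postgres && echo "PostgreSQL is up"
```

If a service still fails after all attempts, its own container logs are the next place to look.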

Step 4: Inspect Reverse Proxy and SSL Configuration

If you are deploying Dify behind Nginx, Apache, or a cloud load balancer, misconfigurations can trigger HTTP 500 errors.

Common proxy issues include:

- Incorrect upstream server address

- Timeout settings too low

- SSL certificate misconfiguration

- Incorrect proxy_pass directive

Verify:

- Correct port mapping

- Valid SSL certificate

- Proper header forwarding (Host, X-Forwarded-For)

Check Nginx error logs:

tail -f /var/log/nginx/error.log
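One way to tell whether the proxy or the application produces the 500 is to request the same path twice, once directly against the application port and once through the proxy, and then compare the status codes. The sketch below assumes curl is installed; http_status and diagnose_proxy are hypothetical helper names, and the URLs in the usage comment are placeholders.

```shell
# Print the HTTP status code for a URL (curl prints 000 if the connection fails).
http_status() {
  curl -s -o /dev/null -m 10 -w '%{http_code}' "$1"
}

# Compare a status measured directly against the app with one measured via the proxy.
# Usage: diagnose_proxy DIRECT_STATUS PROXIED_STATUS
diagnose_proxy() {
  direct=$1
  proxied=$2
  if [ "$direct" = "000" ]; then
    echo "upstream unreachable: fix the application before the proxy"
  elif [ "$proxied" = "000" ]; then
    echo "proxy unreachable: check that it is running and listening"
  elif [ "$direct" -lt 500 ] && [ "$proxied" -ge 500 ]; then
    echo "proxy layer suspected: direct=$direct proxied=$proxied"
  elif [ "$direct" -ge 500 ]; then
    echo "application returns $direct even without the proxy"
  else
    echo "no server error observed: direct=$direct proxied=$proxied"
  fi
}

# Example usage (placeholders in angle brackets):
#   direct=$(http_status http://127.0.0.1:<app_port>/)
#   proxied=$(http_status https://<your_domain>/)
#   diagnose_proxy "$direct" "$proxied"
#   sudo nginx -t   # checks the Nginx configuration for syntax errors
```

A proxied 502 or 504 alongside a healthy direct response usually points at the upstream address or timeout settings listed above.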

Step 5: Evaluate API Provider Errors

Dify connects to LLM providers such as OpenAI or Azure OpenAI. If the external provider returns errors, your interface may display an internal server error.

Look for:

- Rate limit exceeded

- Quota exhausted

- Invalid API key

- Region misconfiguration

Log messages often contain detailed provider responses. Check your provider dashboard for usage limits and billing issues.

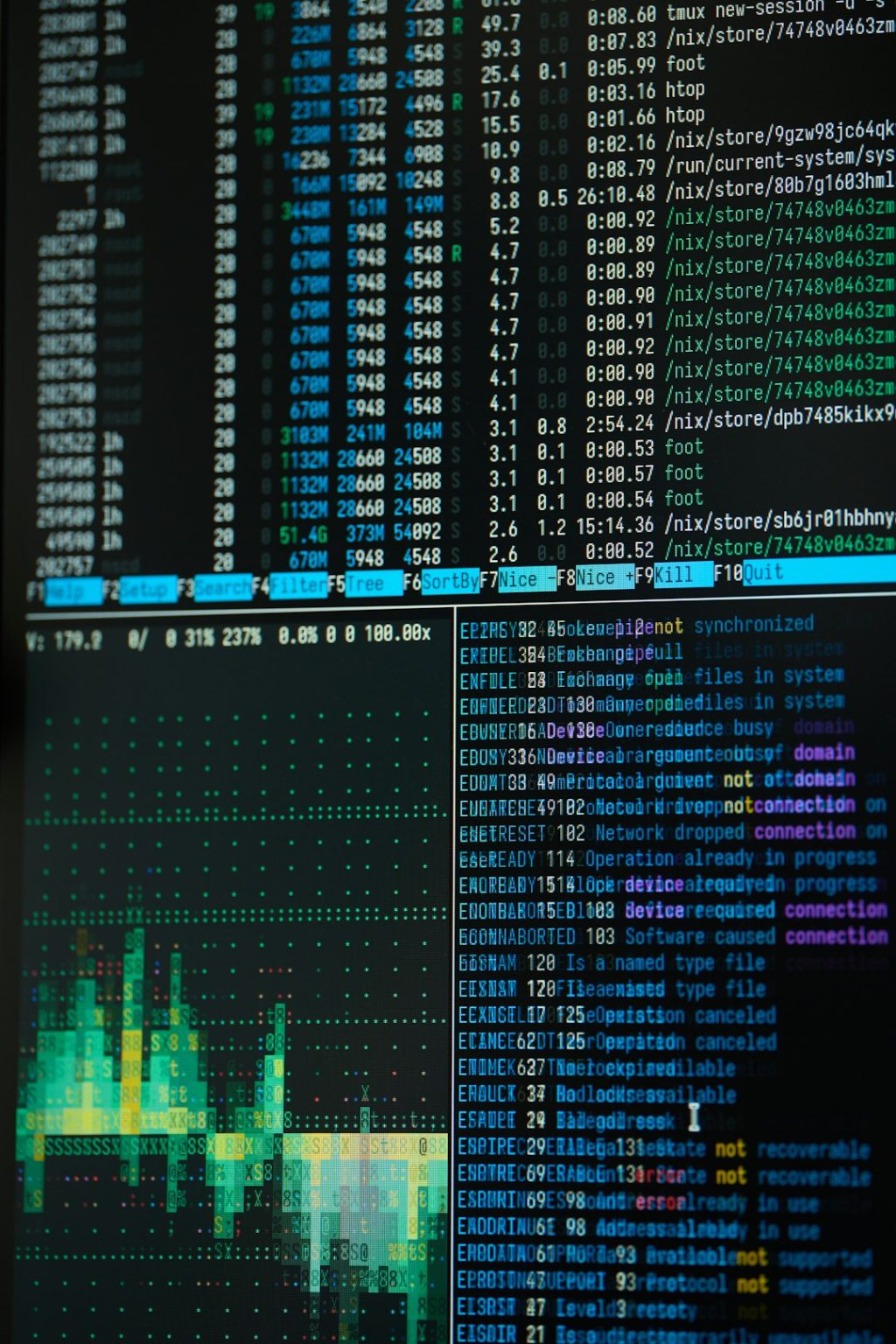

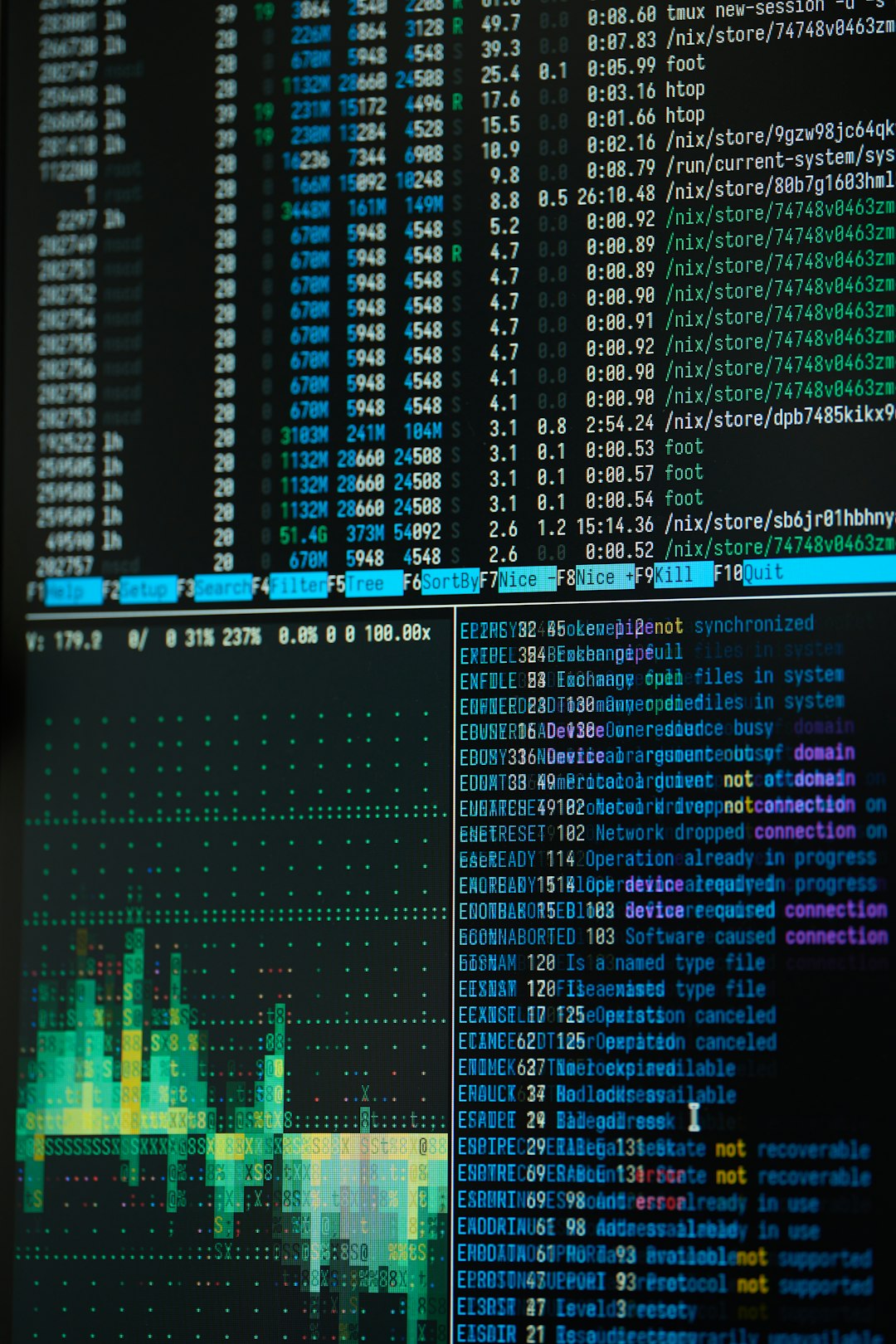

Step 6: Check Server Resource Usage

Low system resources can cause backend services to crash unexpectedly.

Run:

top

htop

df -h

free -m

Watch for:

- High CPU usage (above 90%)

- Memory exhaustion

- Disk full errors

If resources are constrained:

- Upgrade server plan

- Increase swap memory

- Clean unused Docker images

docker system prune
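The disk check can be scripted so that it runs from cron or a monitoring job. The following is a rough sketch; disk_pct and warn_if_over are hypothetical helpers, and the 90% limit simply mirrors the CPU threshold mentioned above rather than any Dify recommendation.

```shell
# Print the used-space percentage (digits only) for the filesystem holding a path.
# Relies on the POSIX output format of df -P, where column 5 is the capacity.
disk_pct() {
  df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Print a warning when a percentage exceeds a limit; print nothing otherwise.
# Usage: warn_if_over LABEL VALUE LIMIT
warn_if_over() {
  if [ "$2" -gt "$3" ]; then
    echo "WARNING: $1 at $2% (limit $3%)"
  fi
}

# Example usage:
#   warn_if_over "disk /" "$(disk_pct /)" 90
#   docker system df   # shows how much space images, containers, and volumes use
```

Running docker system df before pruning shows how much space Docker is actually holding, which helps decide whether pruning is worth it.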

Common Causes and Quick Fix Reference

| Issue | Symptoms | Fix |
|---|---|---|
| Database connection failure | HTTP 500 on all requests | Verify DATABASE_URL and restart PostgreSQL |
| Redis failure | Session errors, task failure | Restart Redis container |
| Invalid API key | Model execution fails | Update provider credentials |
| Proxy misconfiguration | Error after enabling SSL | Review Nginx settings |
| Low memory | Container crashes | Upgrade server resources |

Advanced Debugging Techniques

If standard troubleshooting does not resolve the issue:

- Enable verbose logging

- Run Dify in development mode

- Test API calls with curl or Postman

- Inspect network routes between containers

Example:

curl http://localhost:3000/health

If the health endpoint does not respond (adjust the host and port to match where your deployment exposes the service), the backend service configuration needs attention.

When to Reinstall Dify

In rare cases, corrupted containers or volume data may cause persistent failures. A clean reinstall can help:

docker compose down -v

docker compose pull

docker compose up -d

Warning: Back up your database before removing volumes.
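A backup can be taken with pg_dump from inside the running PostgreSQL container before any volumes are removed. The sketch below is one possible approach; backup_name and backup_db are hypothetical helpers, the postgres user matches the psql command from Step 3, and the database name dify in the usage comment is an assumption that you should check against your own configuration.

```shell
# Build a timestamped file name such as dify-20240101-120000.sql.
backup_name() {
  echo "$1-$(date +%Y%m%d-%H%M%S).sql"
}

# Dump one database from a running PostgreSQL container into a local file.
# Usage: backup_db CONTAINER DATABASE
# Assumes the postgres user; if pg_dump fails, an empty or partial file may remain.
backup_db() {
  out=$(backup_name "$2")
  docker exec "$1" pg_dump -U postgres "$2" > "$out" && echo "wrote $out"
}

# Example usage (container name is a placeholder; "dify" is an assumed database name):
#   backup_db <postgres_container> dify
```

Confirm that the dump file is non-empty, and ideally restore it into a scratch database, before running docker compose down -v.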

Preventing Future Internal Server Errors

Prevention is as important as troubleshooting.

Best practices include:

- Regularly updating Dify and dependencies

- Monitoring server metrics

- Using structured logging tools

- Setting up uptime monitoring

- Testing configuration changes in staging

Automated alerts can notify you before minor issues escalate into system-wide failures.

Final Thoughts

A Dify internal server error can appear intimidating, but in most cases it is a solvable infrastructure or configuration issue. The key is a disciplined approach: review logs, verify environment variables, confirm service connectivity, and check system resources. Avoid guessing or making multiple changes at once; instead, diagnose methodically and apply one fix at a time.

With careful investigation and consistent maintenance practices, Dify can run reliably and at scale—without recurring internal server disruptions.